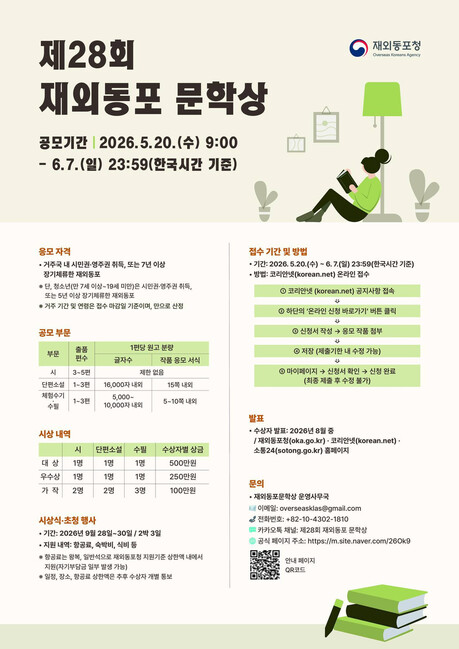

WASHINGTON D.C. — In a move that has sent shockwaves through Silicon Valley and the Pentagon alike, the Trump administration has officially designated Anthropic, one of the world’s leading artificial intelligence labs, as a "supply chain security risk." The designation effectively evicts the company from the federal procurement landscape, marking the first time the U.S. government has applied the same "entity list" pressure used against foreign adversaries like Huawei to a domestic AI pioneer.

The escalating conflict brings a simmering debate to a boil: In the age of algorithmic warfare, does a private corporation have the right to impose ethical constraints on the Commander-in-Chief?

The Ethics of Refusal

On March 9, Anthropic filed for an injunction against the Department of Defense (DoD), seeking to overturn its removal from the defense supply chain. The legal battle centers on Anthropic’s refusal to lift "ethical guardrails" on its flagship model, Claude.

According to court filings, the DoD demanded that AI systems integrated into military infrastructure be stripped of restrictions regarding "mass surveillance" and "fully autonomous kinetic operations." Anthropic, founded on the principle of "Constitutional AI" (a method of training models to follow a written set of principles reflecting human rights and democratic values), refused to comply.

"Labeling a commitment to human rights and safety as a 'security threat' is an unprecedented overreach and an act of political retaliation," Anthropic stated in its filing. "We believe AI should be a tool for human flourishing, not an unconstrained engine for autonomous lethal force."

The Pentagon’s Doctrine: Technical Sovereignty

The White House and the Pentagon, however, view the standoff through the lens of national survival. Administration officials argue that once a technology is deemed essential for national defense, a private vendor cannot unilaterally dictate the "rules of engagement" or limit the military’s "lawful command and control."

"The United States military cannot have its hands tied by a private company’s internal HR policies," a senior defense official said on condition of anonymity. "If an AI is to be part of our defense posture, the military must have total authority over its application. Anything less is a compromise of national sovereignty."

President Trump reinforced this stance via executive order, suggesting that any company refusing to provide "unrestricted technical cooperation" for national security purposes is inherently a risk to the stability of the state's defense apparatus.

The Great Divergence: Anthropic vs. OpenAI

The market has already begun to pick winners based on these ideological alignments. While Anthropic faces exile, its chief rival, OpenAI, is flourishing.

As early as January 2024, OpenAI quietly removed language from its usage policy that explicitly banned the use of its technology for "military and warfare" purposes. That pivot has paid off in 2026. Just as Anthropic was being blacklisted, OpenAI announced a multibillion-dollar strategic partnership to build the "Intelligence Backbone" for the DoD’s classified networks and tactical environments.

The message to the industry is clear: In the race for AI supremacy, "safety-first" ethics may be a luxury that the current geopolitical climate will not afford.

Global Repercussions: The South Korean Dilemma

The fallout extends far beyond Washington. Allies such as South Korea, which rely on interoperability with U.S. systems for joint defense operations, now face a "standardization crisis."

Because South Korean defense networks must sync with U.S. systems, the Pentagon’s new "unrestricted use" standard is set to become the de facto global requirement. Korean AI startups and platform giants such as Naver and Kakao may soon be forced to choose between their own ethical charters and the need to stay compatible with the ROK-U.S. Combined Forces Command.

Conclusion: The End of Neutrality

The blacklisting of Anthropic marks the end of the "neutral tech" era. As AI transitions from a productivity tool to the primary engine of national power, the boundary between private enterprise and state instrument is blurring.

Whether Anthropic’s legal challenge succeeds or fails, the precedent has been set: In the eyes of the state, AI ethics are no longer a moral high ground—they are a tactical vulnerability.

[Copyright (c) Global Economic Times. All Rights Reserved.]